Artificial Intelligence (AI) is no longer a future concept – it’s a present reality, reshaping how businesses operate, make decisions and deliver value.

From optimizing product portfolios and financial processes to unlocking insights from complex data sets, AI is rapidly becoming a core driver of innovation and competitive advantage across industries.

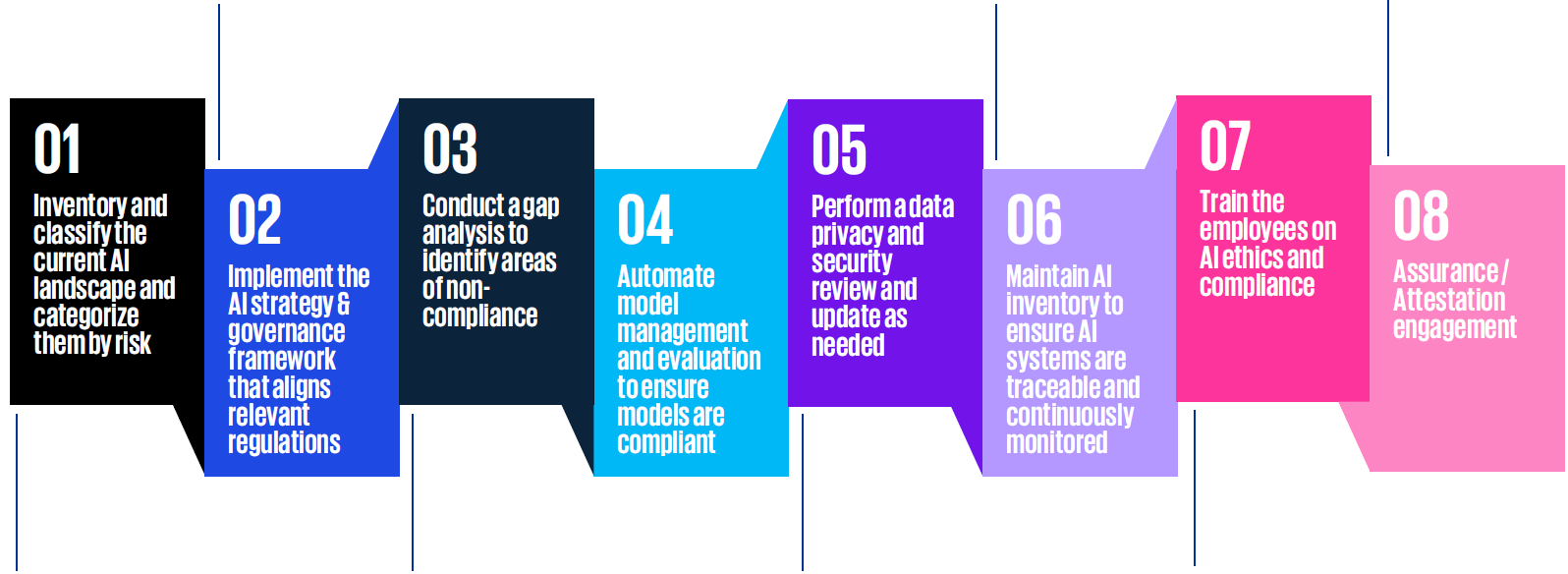

However, as AI becomes embedded in products, business-critical processes and decision-making, organizations must move beyond experimentation and focus on reliability and trust. The rapid deployment of AI applications has exposed organizations to new forms of operational, ethical and regulatory risk. As a result, key priorities include combating AI bias, improving model transparency and complying with regulations and standards such as the EU AI Act and ISO/IEC 42001:2023.

To stay resilient and competitive in the long term, organizations must ensure that AI‑enabled products, solutions and processes are reliable, controllable and auditable. Without strong AI risk management and assurance, the benefits of AI can quickly be undermined by loss of trust, regulatory challenges and reputational damage.